Google robot teaches itself to walk without human help in just a few hours

AI system teaches itself to walk on three different terrains

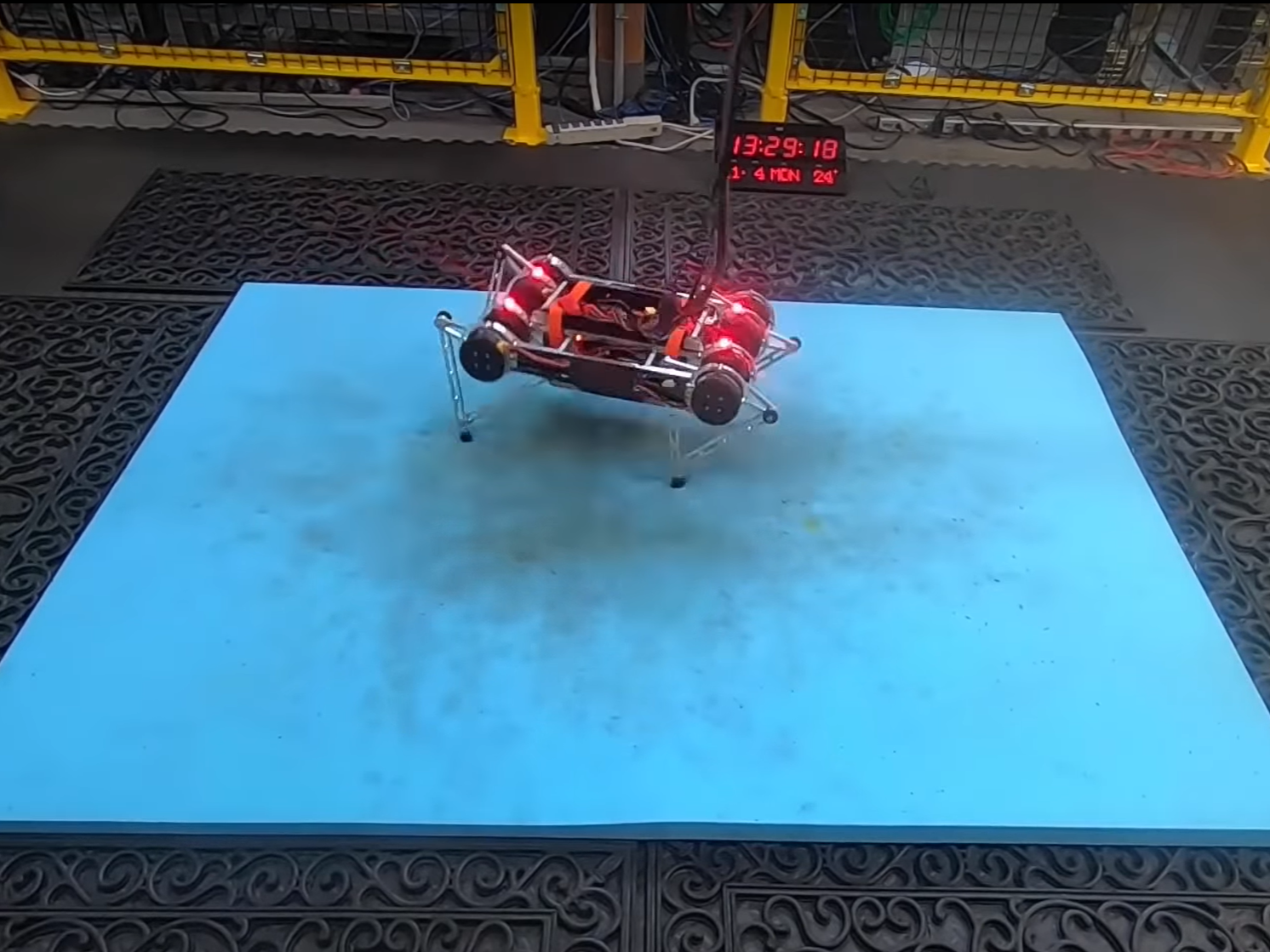

Google researchers have built a four-legged robot capable of figuring out how to walk without needing any assistance from humans.

The team from Google's Robotics division and the Georgia Institute of Technology used an artificial intelligence technique called deep reinforcement learning, which they applied to the task of learning to walk.

The AI system was capable of teaching itself to walk on three different terrains: flat ground, a soft mattress, and a doormat with crevices.

"Our system can learn to walk on these terrains in just a few hours, with minimal human effort, and acquire distinct and specialised gaits for each one," states a paper detailing the system.

The robot started by rocking backwards and forwards, before using trial and error to work out that it could propel itself forward by bending its legs in the correct sequence.

Another algorithm was used to help the robot stand back up whenever it fell over.

Within a few hours of starting out, the robot was able to walk reliably across all three terrains without falling over. Once it had learnt to walk, the researchers were able to plug in a game console controller and manoeuvre the robot forwards, backwards, left and right using the movements it had learnt.

The main benefit of a robot that requires no manual input is that the AI framework can learn to walk on a huge range of surfaces without each gait needing to be programmed individually.

The researchers hope to now test the learning technique on robots in a variety of other situations and improve the system so that it can be used outside the lab in real-world conditions.

Reinforcement learning has previously been used to teach robots a variety of skills, such as playing Jenga and video games.

Last year, a team from the Computational Robotics Lab at ETH Zurich demonstrated a 3D-printed robot capable of autonomously teaching itself how to ice skate.
